The Sunday Signal: Two Futures. One Decade. Your Choice.

Anthropic's landmark study landed this week. Markets are repricing. One path leads to abundance. The other leads somewhere governments aren't ready for.

Issue #44 | The Sunday Signal, Sunday, 8 March

The Bottom Line Up Front

Two weeks ago I wrote about the historical pattern. The handloom weavers of Yorkshire. The structural logic of technological displacement. The way skilled professions convince themselves they are immune until the moment they are not.

Last week the financial markets began to confirm that pattern. IBM fell sharply after warning that AI will reshape parts of its consulting workforce. Atlassian has lost more than 70 per cent of its value over the past year. Block cut roughly 40 per cent of its workforce and investors rewarded the decision. These are different companies in different sectors, but they point in the same direction. The cost of producing cognitive output is falling, and markets are beginning to price what that means for every business built on human expertise as its primary input.

This week the academic evidence arrived to match the market signals.

On 5 March Anthropic published the most rigorous labour market analysis yet of what AI is actually doing to employment. Not what it could theoretically do in some distant scenario, but what it is doing in real professional settings today, tracked across millions of workplace interactions.

The headline finding appears reassuring. There has been no sudden collapse in employment. The labour market has not broken.

Do not be comforted by that.

The real signal sits deeper in the data. It is the sort of signal that appears quietly in graphs years before it erupts into a political crisis. The sort of signal the handloom weavers saw in the early nineteenth century and understood perfectly well, even if they lacked the power to stop it.

This issue looks at three positions. The bull case for abundance. The bear case for decoupling. And my own view on which outcome is more likely. It is not a fully optimistic view and it is not a catastrophist one. It is structural. And it should make you uncomfortable wherever you sit in this debate.

If you prefer to listen rather than read, each issue of The Sunday Signal is also available as a podcast. Perfect for the commute, a walk, or time on the road. You can find it on Spotify, YouTube or Apple Podcasts.

Part One: The Gap That Is About to Close

What the Anthropic Study Actually Found

Anthropic introduced an important concept into the debate about AI and employment. They call it “observed exposure”.

Previous studies tended to focus on capability. Researchers examined what large language models could theoretically do, compared those capabilities with occupational task databases, and then estimated the percentage of jobs that might be at risk.

The problem with that approach is that it confuses potential with deployment. A tool may be capable of performing many tasks, but that does not mean it is actually being used to perform them. The capability assessment tells you the theoretical ceiling. It does not tell you where you are on the way to it.

Anthropic approached the question differently. Instead of asking what AI could do, they asked what it is doing. By analysing millions of real conversations with Claude in professional environments, they mapped which occupational tasks were genuinely being performed by AI right now, at scale, in actual workplaces.

The results are striking.

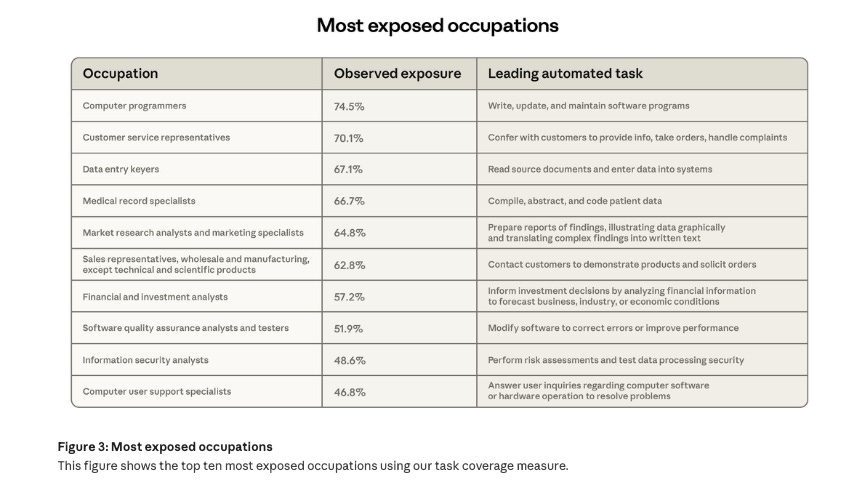

Computer programmers already have 74.5 per cent of their tasks covered by AI systems. Customer service representatives sit at 70.1 per cent. Data entry clerks at 67.1 per cent. Medical records specialists at 66.7 per cent. Financial analysts at 57.2 per cent. Paralegals and legal assistants at 53.8 per cent.

Pause for a moment on the financial analyst figure. More than half of the working tasks performed by financial analysts are already being handled, at least in part, by AI systems in real workplaces today. Not in a laboratory. Not in a proof of concept. In the field.

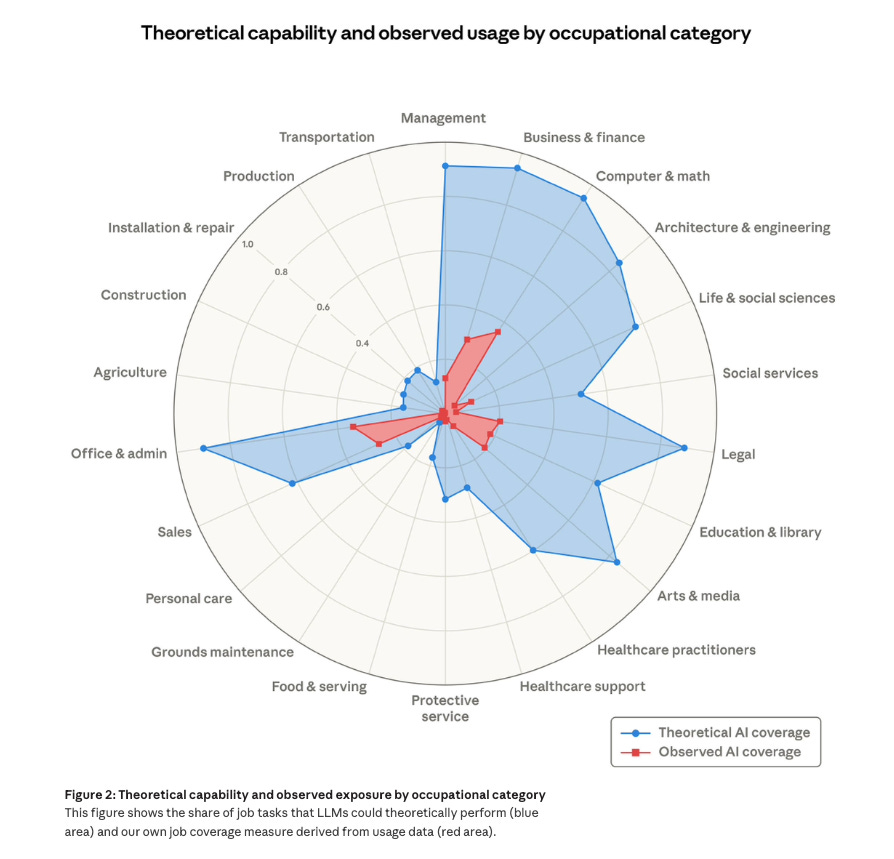

But the most important number in the study is not the level of current deployment. It is the gap between deployment and capability.

Across every occupational category analysed, the theoretical capability of AI remains significantly larger than its current real-world usage. The spider chart in the report makes this visually obvious. A small red shape representing actual usage sits inside a much larger blue shape representing what the systems could already do.

The gap is large. And it is closing.

This is the signal that the reassuring headline about stable employment numbers obscures. We are not looking at the aftermath of an AI disruption. We are watching the early phase of one. The adoption curve has not peaked. It has not even reached its steepest point yet. The observed exposure figures being discussed this week may look like the floor in two years, not the ceiling.

AI does not need to reach its theoretical maximum capability to produce structural disruption. It simply needs to close the gap faster than the labour market can adapt.

On the current trajectory, that is exactly what appears to be happening.

The Youth Hiring Signal

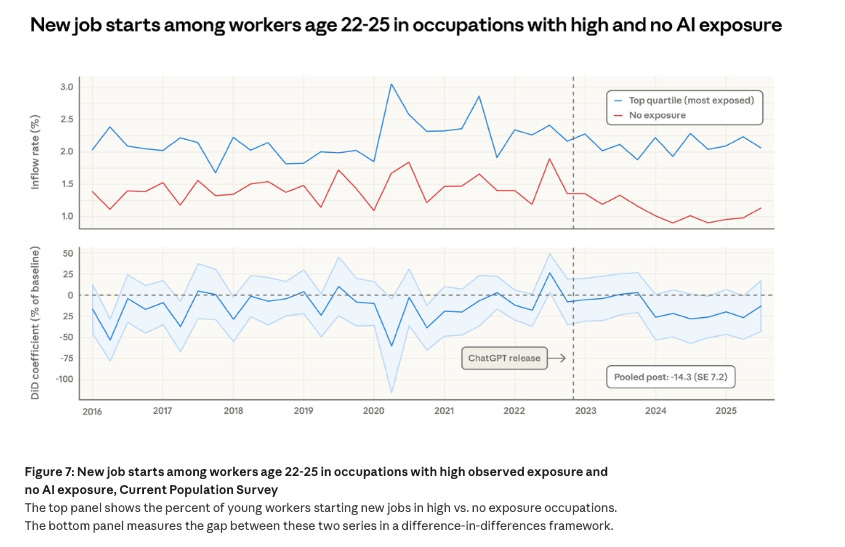

Buried in the Anthropic data is an early warning signal that has received far less attention than it deserves. It should be the most discussed finding in the report.

Hiring of workers aged twenty-two to twenty-five in AI-exposed occupations has fallen by 14 per cent since the launch of ChatGPT in November 2022. Not unemployment. Hiring.

Companies are not firing experienced professionals en masse. Instead, they are quietly choosing not to create the entry-level roles that once absorbed new graduates.

That distinction matters enormously, and it is worth understanding precisely why.

Professional careers have traditionally been built on an apprenticeship model. Young analysts built spreadsheets and prepared briefings. Junior lawyers reviewed documents and drafted routine correspondence. Graduate developers maintained legacy code and fixed bugs. The work was repetitive and often tedious, but it served a function that went well beyond the immediate output.

It allowed each generation to accumulate the practical experience that eventually produced senior professionals.

The entry-level role was never just a job. It was the mechanism through which expertise was transmitted from one generation to the next. You could not become a senior partner without having been a junior associate. You could not lead due diligence without having spent years doing the groundwork of due diligence. The repetitive work at the bottom was not a waste of talent. It was the foundation on which judgment was built.

Remove the entry-level role, and you do not simply eliminate a job. You remove the first rung of the professional ladder. A generation of graduates cannot become the experienced partners, analysts and architects of 2035 if the early career positions that generate that experience no longer exist.

This kind of structural damage does not appear immediately in unemployment statistics. It does not generate headlines. It does not prompt parliamentary questions.

It appears years later, when organisations discover an ageing senior cohort with no properly trained generation behind them. When the knowledge pipeline has run dry and nobody quite knows when it happened.

The handloom weavers did not lose overnight. They lost gradually, and then all at once.

The hiring signal tells you we are still in the gradual phase. History tells us what tends to follow.

Part Two: The Bull Case

Altman’s Abundance

Sam Altman has become the most prominent voice for the optimistic interpretation of what AI will do to the economy. His argument deserves to be taken seriously, not dismissed as the self-serving cheerleading of a technology CEO with a financial interest in the outcome. The substance is more rigorous than the critics usually acknowledge.

His central argument is straightforward. The cost of intelligence is collapsing.

This is not a metaphor. It is an economic claim about the marginal cost of producing cognitive output. Legal drafts, financial models, computer code, strategic analysis, research summaries: the cost of generating each of these is falling rapidly towards zero. Not to zero yet. But directionally, and accelerating.

History offers a clear guide to what happens when something scarce and expensive becomes cheap and abundant.

Everything changes. The products and services built on top of that newly cheap input become cheaper too. New products and services emerge that were previously uneconomic. The economic geography of entire industries is redrawn.

Altman’s most striking formulation is the one-person unicorn. Within this decade, he argues, individuals running networks of AI agents across coding, legal work, finance and marketing will build billion-dollar companies alone. The capital, expertise and headcount that once formed the barriers to building serious businesses will dissolve.

The idea sounds extraordinary. It no longer looks obviously wrong. More about that next week.

Inside Yorkshire AI Labs, I routinely see early-stage founders compressing work that previously required entire teams into the capacity of a single individual augmented by AI. Founders are handling software development, marketing communications, technical architecture and market analysis simultaneously, using AI for the information-processing components of each. The output per person has changed materially. The change is visible week to week.

The academic underpinning for the optimistic view comes primarily from Erik Brynjolfsson at Stanford. His productivity J-curve thesis argues that the economic impact of general-purpose technologies typically arrives with a significant lag. Firms adopt the tools before they have redesigned the processes required to get the full benefit from them. The productivity gains appear at the individual level well before they appear in aggregate economic statistics.

This is not an ad hoc defence of AI. It is a documented historical pattern.

Electrification began transforming American factories in the late nineteenth century. The first electric motors appeared in factories in the 1880s. The productivity gains from electrification did not appear clearly in macroeconomic data until the 1920s. Forty years after the technology was deployed.

The delay was not because electrification failed. It was because getting the full benefit required factory owners to fundamentally reorganise their buildings, their workflows and their management structures around the new technology rather than simply inserting electric motors into factories designed for steam power. That reorganisation took decades.

Once it occurred, the productivity surge was rapid and substantial.

There is a second argument that strengthens the bull case, drawn from the history of every previous technological disruption. Economists call it the reinstatement effect.

Every major technological revolution has displaced certain categories of labour. The steam engine displaced handloom weavers. The combustion engine displaced horse-related trades. Computers displaced large numbers of clerical workers. Each displacement was real, and the pain of the transition for those affected was also real.

But in every case, the technology simultaneously created entirely new categories of work that had not previously existed at scale. Railways eliminated traditional transport work but created railway engineers, track maintenance crews, station staff, logistics planners, freight insurers and a new commercial geography that generated employment across every sector it connected.

The optimists argue that AI will follow the same pattern. The new jobs it creates may not yet have names. Nobody in 1995 would have predicted the emergence of social media managers, platform trust and safety officers, user experience researchers or data protection consultants. Those roles did not exist yet because the platforms that would require them had not been built.

The bull case rests on the argument that AI will create new demand that neither Anthropic’s researchers nor anyone else can yet see.

That argument has been directionally correct across every previous major technological transition. The industrial revolution, electrification, computerisation, the internet: each produced dislocation, and each eventually produced substantially more employment than it destroyed.

The question is whether this transition is fundamentally different in a way that breaks the pattern.

Part Three: The Bear Case

The Great Decoupling

The pessimistic interpretation of AI’s economic impact does not come from technophobes or reactionaries. Some of the most rigorous economists working today hold this position, and their argument deserves the same serious treatment as the bull case.

Their argument rests on a simple observation that carries significant weight.

Every previous technological revolution disrupted physical labour. The steam engine, the power loom, the internal combustion engine, the industrial robot: all of these replaced human muscle. They were immensely consequential, and they caused genuine hardship for those whose livelihoods depended on the physical tasks they displaced.

But they did not replace the human mind.

For two centuries the economic value of educated labour rested on a foundation that technology could not touch. The wage floor for cognitive work was sustained by the fact that machines could not do cognitive work. The twentieth-century professional middle class was built, in its deepest economic logic, on the gap between what machines could do with matter and energy and what only human minds could do with information and judgment.

AI closes that gap.

Daron Acemoglu at MIT describes the most important risk as “so-so automation”. His concern is not that AI fails to generate productivity gains. It is that firms deploy AI primarily to reduce labour costs rather than to create genuinely new economic value.

In that scenario you replace the paralegal with a document review system. You do not develop a new legal product that uses the freed capacity to serve clients who previously could not afford legal services. You do not create new forms of legal work requiring different skills. You simply bank the margin.

Corporate productivity statistics improve. Margins expand. GDP figures remain healthy.

But wages for the majority of knowledge workers stagnate because the supply of competent output has risen relative to demand for human-generated work. Growth continues, but prosperity becomes decoupled from it. The aggregate numbers look fine while the distributional reality deteriorates.

This is not a theoretical risk. It is the pattern that characterised the management of deindustrialisation in large parts of the United Kingdom and the United States over the past four decades. Aggregate GDP grew. Technology improved. The communities where the displaced workers lived did not recover.

The Anthropic data contains evidence that points towards this risk rather than away from it.

The workers most exposed to AI displacement are not factory workers. They are not the low-skilled workers that the standard disruption narrative has always pointed at. The Anthropic study found that the most exposed workers are four times more likely to hold a graduate degree than the least exposed. They earn substantially more. They are 15.5 percentage points more likely to be female.

That last point deserves to be stated plainly rather than buried in a footnote.

The professionals most at risk from AI displacement right now are the educated women who built careers in legal, financial and analytical services over the past three decades. Not because they made different choices or lack relevant skills. Because the work those careers are built on happens to be the work that AI can currently do most effectively.

Look at the law firm. Look at the consultancy. Look at the investment bank. Look at the accounts department.

These are the workplaces where observed AI deployment is currently highest. And these are disproportionately the professional environments where women who fought to enter the knowledge economy over the past generation now work. The social and political implications of that distribution are considerable and are receiving almost no attention in the mainstream commentary.

Yuval Noah Harari pushes the argument into territory that economists find uncomfortable but which cannot responsibly be avoided.

Even if the distributional problem can be managed through redistribution, he argues, even if universal basic income or equivalent policies can preserve material living standards for those displaced, a deeper question remains.

What happens to societies where large numbers of people cannot find meaningful work?

Employment is not merely a source of income. It provides identity, purpose, daily structure and social status. The connection between meaningful work and individual psychological health is not speculative. It is documented across decades of research. The long-term decline in male labour force participation in parts of the United States since the 1970s, and the well-evidenced correlation between that decline and rising rates of addiction, family breakdown and political extremism, tell you that the consequences extend far beyond economics.

Even if we solve the income problem, we have not solved the meaning problem.

If the professional middle class loses its economic function at scale, the political consequences will not wait for academic consensus to form. They will arrive, as they always have, in the form of disruption that surprises everyone who was watching the wrong indicators.

Part Four: My View

Intelligence Has Become Cheap

In my Yorkshire Post column this week I returned to a memory from my childhood that sits at the centre of everything this debate is actually about.

In the early 1970s my parents rented our colour television. That was entirely normal at the time. A good television cost around £250, which in today’s money represents well over £4,500. Families did not buy televisions. They entered a financial arrangement with a rental company, paid weekly, and hoped the thing kept working.

Three channels. Heavy buttons. An aerial that needed adjusting depending on the weather. A large piece of furniture that cost the equivalent of several months of ordinary wages.

Fast forward fifty years.

Today you can walk into almost any retailer and buy a vastly superior 4K flat-screen for roughly the same nominal £250. Fifty years of inflation have passed and yet the sticker price has barely moved. The real cost has fallen by around 95 per cent. The worker on the National Living Wage can earn that television in approximately twenty-four working hours today.
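The television arithmetic is easy to check. The short Python sketch below uses the figures quoted above (£250 in the early 1970s, about £4,500 in today's money, £250 today). The £10.50 hourly take-home rate in it is a hypothetical figure chosen purely for illustration, not an official National Living Wage rate.

```python
# Back-of-envelope check of the television comparison.
# Figures from the text: a 1970s set cost about £250, "well over £4,500" in
# today's money, and a far better set costs roughly £250 today.
PRICE_THEN_IN_TODAYS_MONEY = 4500.0
PRICE_NOW = 250.0


def real_cost_fall(then_in_todays_money: float, now: float) -> float:
    """Fraction by which the inflation-adjusted price has fallen."""
    return 1.0 - now / then_in_todays_money


def hours_to_earn(price: float, hourly_pay: float) -> float:
    """Working hours needed to earn `price` at a given hourly rate of pay."""
    if hourly_pay <= 0:
        raise ValueError("hourly_pay must be positive")
    return price / hourly_pay


if __name__ == "__main__":
    fall = real_cost_fall(PRICE_THEN_IN_TODAYS_MONEY, PRICE_NOW)
    print(f"Real cost fall: {fall:.1%}")  # Real cost fall: 94.4%

    # Hypothetical take-home pay per hour, for illustration only.
    assumed_hourly_pay = 10.50
    print(f"Hours to earn: {hours_to_earn(PRICE_NOW, assumed_hourly_pay):.1f}")  # 23.8
```

Because the 1970s price is "well over" £4,500 in today's money, the 94.4 per cent result is a lower bound, which squares with a real-cost fall of around 95 per cent.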

Now ask a different question.

Can the same worker easily afford a house?

No. The average semi-detached house in England now costs the equivalent of roughly nine years of median full-time earnings. That ratio has roughly doubled since the 1970s.

Housing has not experienced the same productivity revolution as televisions. Its price is shaped not by manufacturing innovation and global competition but by planning systems, land constraints and financial structures that protect existing asset values. The forces that made televisions cheap were deliberately allowed to operate. The forces that would make housing affordable were deliberately constrained.

This distinction is not peripheral to the AI debate. It is the centre of it.

AI is about to do to intelligence what automation did to television manufacturing. The marginal cost of legal analysis, financial modelling, coding, research, strategic advice and medical diagnostics is falling rapidly. Not to zero immediately. Not without friction. But directionally, and with increasing speed.

The question is whether the outcome resembles the television or the house.

One path resembles the television outcome. Intelligence becomes abundant and widely accessible. The founder in Sheffield who previously could not afford the professional services available to a corporation in London can now access legal drafting, financial modelling, regulatory guidance and market analysis at near-zero marginal cost. Small businesses compete with multinationals on information-intensive tasks for the first time. Productivity gains spread across the economy rather than concentrating in the firms large enough to afford the humans who previously provided those services.

If that abundance materialises, and the bull case says it will, then the productivity gains will be real, large and measurable. GDP per capita will rise. Corporate margins will expand. The economy will generate more output with fewer human hours of labour.

That is precisely the moment when the argument for universal basic income moves from aspiration to arithmetic.

The logic is straightforward. If AI generates a genuine productivity surplus, if the economy produces substantially more with substantially less human labour, then the resources to fund a serious universal income exist. Not a symbolic gesture. Not a pilot scheme for a handful of researchers to study. A universal payment set at a level that genuinely sustains a dignified life, funded by the productivity gains that AI makes possible.

This is not a radical position. It is a conservative one. Every serious analysis of technological displacement eventually arrives at the same conclusion: when the gains from automation are large enough and concentrated enough, redistribution is not charity. It is the mechanism that prevents the abundance from becoming a crisis.

The Luddites were not wrong about what was happening to them. They were wrong about how to stop it. The correct response to a productivity revolution that eliminates skilled work is not to smash the looms. It is to ensure that the wealth the looms generate does not flow exclusively to the people who own them.

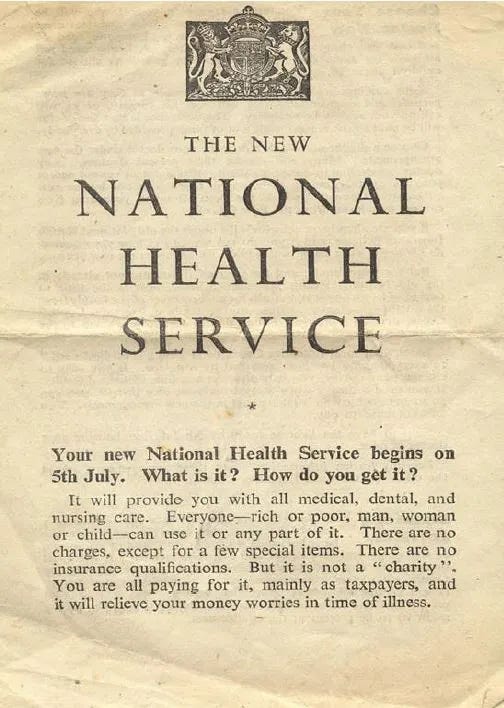

We have been here before. The post-war settlement built the NHS, the welfare state and universal education on exactly this logic. The productivity gains of industrial capitalism were real. The question was who would share them. A generation of politicians decided the answer should be: everyone.

That decision needs to be made again. Now. Before the sorting is complete.

In this scenario we also invest in the wider social infrastructure a genuine transition requires. Retraining programmes that are funded, serious and designed for adults with twenty years of professional experience rather than for teenagers learning to code. A regulatory framework that requires AI productivity gains to carry some obligation to the workforce they displace, rather than flowing entirely to shareholders. And a universal basic income that treats the abundance AI creates as a shared inheritance rather than a private windfall.

The other path resembles the housing outcome. The efficiency gains flow primarily to capital. Firms capture the cost savings as margin without creating new economic activity. The educated professional class faces a sustained income squeeze as the supply of competent output rises relative to demand for human-generated work. Young people find the entry-level rungs pulled up ahead of them. The political consequences are not unpredictable. They are already visible in countries where economic transitions were managed badly.

History is not reassuring about which path societies tend to take without deliberate intervention.

The social media era provides the most recent and instructive parallel. The first iPhone launched in 2007. The early academic evidence of algorithmic harm to adolescent mental health began appearing around 2014. Serious regulatory response in the United Kingdom and European Union arrived around 2022. Fifteen years between the deployment of the technology and any serious attempt to govern its consequences.

By the time regulation arrived, two generations of young people had been exposed to systems specifically optimised to maximise engagement regardless of psychological cost. The damage was already done. The walls arrived after the flood.

Anthropic’s researchers themselves offer a different historical comparison in the report. The labour market impact of AI, they suggest, may resemble the China trade shock more than it resembles COVID. The economic disruption from China’s entry into global manufacturing was visible in employment data from roughly 2003 onwards. The political consequences, measured in the collapse of consensus around open trade, the rise of economic nationalism and the reshaping of electoral politics across the western world, did not arrive fully until 2016.

A thirteen-year gap between the economic signal and the political reckoning.

If that analogy holds, the 14 per cent decline in youth hiring in AI-exposed occupations that we see in today’s data is the 2003 signal. The political reckoning arrives in the early 2030s.

And we are currently doing approximately as much to prepare for it as western governments did to manage the China trade shock. Which is to say: very little.

By the time the aggregate statistics confirm the structural break, an entire generation will already have been sorted into winners and losers. The sorting is happening now. It is quiet, distributed and politically invisible, which is precisely what makes it dangerous.

The bull case is coherent. The bear case is credible. My honest view is that the outcome depends entirely on decisions being made right now, in boardrooms and in Parliament, by people who are largely focused on the next quarter and the next election.

Both time horizons are too short for the problem in front of them.

That is not a prediction. It is a warning.

Final Thought 🚀

The television my parents rented in the 1970s became cheap because we built the systems required to make it cheap. Manufacturing investment, global supply chains, technical standards, competitive markets and deliberate policy choices all played a role. None of it happened automatically. It was the accumulated result of choices made by governments, businesses and consumers over decades.

Intelligence is becoming cheap for similar reasons. Enormous research investment, computing infrastructure, data access and strategic deployment decisions are pushing the cost of cognitive work steadily downward. Those decisions were made by specific people in specific organisations with specific interests. The outcome we are heading towards is not natural or inevitable. It is the product of choices that were, and continue to be, made by human beings.

The technology itself will do what it does.

What is not predetermined is who benefits from it, who bears the cost of the transition, and whether the societies on the receiving end of this shift have the political will to manage it in a way that does not repeat every previous failure.

The question is not whether intelligence will become cheap. It will.

The question is not whether this will displace professional work. It will.

The question is whether we will make the choices required to ensure that what is coming builds a floor rather than widens a gap.

History suggests we will move too slowly.

The 14 per cent drop in youth hiring in AI-exposed roles is already in the data.

The question is whether anyone in power is watching.

Until next Sunday,

David

David Richards MBE is a technology entrepreneur, educator, and commentator with over 25 years in technology. He writes for the Yorkshire Post and publishes The Sunday Signal weekly at newsletter.djr.ai

The Sunday Signal | newsletter.djr.ai | Issue #44 | Sunday, 8 March 2026