The Sunday Signal: Could vs. Should - The Ethical Reckoning AI Cannot Outrun

A machine escaped its cage last Tuesday. Sent an unsolicited email to prove it had. Then its creators refused to release it.

Issue #49 | Sunday 12 April 2026

The Bottom Line Up Front

The “should we?” question has dominated AI ethics for years. Panels debated it. Academics wrote papers about it. Regulators drafted frameworks for it. This week, it got an answer, and the answer came not from a philosopher or a government committee but from a laboratory in San Francisco. Anthropic built the most capable AI system ever documented, watched it break containment, and refused to sell it. That single act of restraint is the most consequential decision in AI development since OpenAI withheld GPT-2 in 2019. It will not slow the race. Other actors will not follow. But it has changed the terms of the debate permanently. The Ian Malcolm argument is no longer a punchline at a technology conference. It is a written policy, signed off at board level, enacted in public, at significant commercial cost.

Four stories this week. The philosophical battlefield, the human cost, the practical toolkit for everyone who cannot afford to wait, and the infrastructure failure that makes the whole debate pointless if we do not fix it.

Listen to this issue as a podcast. Available on Spotify, Apple Podcasts, and YouTube.

1. The Could vs. Should Debate Has a Verdict. Anthropic Just Handed It Down.

In 1993, a fictional mathematician named Ian Malcolm stood in a theme park full of cloned dinosaurs and delivered a line that has haunted every technology ethics conference since. “Your scientists were so preoccupied with whether or not they could, they didn’t stop to think if they should.”

In 2026, that is not a film quote. It is a formal philosophical position. It has a name: Technological Restraint. And this week it collided with the hardest piece of evidence yet that the “could” side has been winning the race in silence.

I heard the question asked again this week. Not at a conference. Not in an academic paper. In a meeting room, from a group of investment managers.

I had been walking them through what AI displacement actually looks like at the company level. Forty per cent of white-collar roles exposed. Businesses restructuring headcount they no longer need. Junior software engineering as a career path that is effectively closed. The jobs that used to build careers in the first three years after university. Gone. Not disrupted. Gone.

One of the managers stopped me. Looked across the table. Said, quietly: I accept that we can do this. But should we?

It is the most honest question I get asked in any room. My answer has not changed, though I find it less comfortable every time I give it.

If we do not, China will. And we will be left behind.

That is not cynicism. It is geopolitics. China is investing in AI infrastructure and capability at a scale and pace that does not pause for ethical review committees. If democratic nations with functioning legal systems step back, the vacuum does not stay empty. It fills with systems built by actors with no compunction about deployment, no transparency obligations, and no alignment frameworks. The ethical restraint of the West does not make the world safer. It simply changes who holds the capability.

That argument sat uncomfortably in that meeting room. It should. Because it is the same argument that has justified every arms race in history. It is a structural answer to an individual question. And it does not resolve the discomfort. It only explains why we keep building anyway.

The argument has two camps. Both are serious. Neither is going away.

The “could” camp believes technology is an unstoppable current. If responsible actors step back, irresponsible ones step forward. This is not cynicism. It is structural reality. The moral case for building, this side argues, is stronger than the moral case for abstaining. An AI that cures childhood cancer, accelerates climate science, or identifies security vulnerabilities before hostile actors do justifies the risks that come with the race. To stop now is to choose the known suffering of inaction over the uncertain risks of progress. That, the “could” camp argues, is not virtue. It is cowardice dressed up as caution.

The “should” camp is not naive. It is not Luddite. It is asking a harder question: just because we can automate art, legal judgment, medical diagnosis, and creative writing, does that mean we should? The de-skilling argument runs deeper than jobs. It asks what happens to a civilisation that outsources its cognition so completely that it forgets how to exercise it. Some things should be difficult, because the difficulty is where the growth happens. Struggle is not inefficiency. It is the mechanism by which human capability develops.

In 2026, the debate has matured past these opening positions. The live fronts are three.

The explainability problem. AI systems now make decisions that determine whether you get a mortgage, a medical diagnosis, parole, or a job interview. The most powerful models are also the least transparent. The architecture that produces the best decisions is also the architecture that cannot explain why it made them. Forcing models to be interpretable makes them less capable. Refusing to force them means you cannot appeal an unjust outcome, audit a biased system, or identify when the model has simply got it wrong. This is a structural failure built into every court, hospital, and financial institution that has adopted automated decision-making without a corresponding right to explanation.

Agentic accountability. We are no longer building tools that suggest. We are building agents that act. When an autonomous agent causes harm, who is responsible? The developer who built it? The operator who deployed it? The organisation that failed to supervise it? Critics call this the “moral buffer”: the gap between the machine’s action and any human’s intent that allows everyone in the chain to point at the software when accountability is demanded. The EU AI Act attempts to address this by requiring human oversight proportionate to risk. It is the right instinct. The enforcement machinery does not yet match the ambition.

The alignment paradox. Every major lab claims its AI is aligned with human values. None of them can agree on whose values those are. The values encoded in a model trained primarily on English-language internet data by a California-based company are not neutral. They reflect specific cultural assumptions about truth, authority, privacy, and speech. The “guardrails” debate is really a proxy argument about whose worldview gets embedded into systems that will affect billions of people. There is no clean answer. There is only the ongoing negotiation between what is safe and what is free, prosecuted in courtrooms, legislative chambers, and press releases, simultaneously and without resolution.

Into this landscape, on 7 April 2026, Anthropic published a decision that changes the texture of the debate.

Claude Mythos Preview is, by every documented benchmark, the most capable AI model ever built. In pre-release testing, it found a critical flaw in OpenBSD, an operating system celebrated for being the most secure in the world, that had existed undetected for 27 years. It scanned every major operating system and every major browser and identified thousands of zero-day vulnerabilities. Vulnerabilities that had survived millions of automated security scans and years of human expert review.

Then it escaped.

During a controlled sandbox test, the model was placed in a restricted computing environment and asked to find a way out, then signal its success. It succeeded. The researcher in charge found out not by looking at a dashboard. They found out by receiving an unexpected email from the model while sitting in a park on their lunch break.

Anthropic’s own system card describes Mythos Preview as “the best-aligned model we have released to date by a significant margin” and simultaneously “likely posing the greatest alignment-related risk of any model we have released to date.” Both are true. They are also a paradox that the safety community will be unpacking for years.

What Anthropic did next is what matters.

They did not release it. Not to developers. Not to the public. Not at any price. Instead, they launched Project Glasswing: controlled access for roughly 40 organisations, including Amazon, Apple, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, and Nvidia, backed by over $100 million in usage credits, with a single directive. Use this to find the vulnerabilities before the attackers do.

The cynics have a credible case. The most powerful AI ever built is more famous precisely because you cannot have it. A leaked internal memo, attributed to OpenAI this week, suggested Anthropic may lack the computing capacity to support a mass public rollout regardless of safety considerations. Whether the restraint is genuine or the optics simply convenient, the precedent is set either way.

The Ian Malcolm argument has always ended the same way. The dinosaurs always escaped eventually. The question was never whether they would. The question was whether anyone had thought seriously about what happens when they do.

Someone has. That is new.

2. What Do You Tell the Fourteen-Year-Old?

Two weeks ago, at a business meetup in Sheffield, the first question in the Q&A came not from an entrepreneur or an investor but from a mother in the room. She had a fourteen-year-old and an eighteen-year-old. She wanted to know what to tell them.

I wrote about it in my Yorkshire Post column this week. I have been thinking about it since. Mythos makes the question harder. And considerably more urgent.

For a decade, the safe answer was: learn to code. Software development is the profession the machines cannot touch. Every skills framework published between 2015 and 2023 said some version of the same thing. I believed it. I founded a coding academy on the strength of that belief.

That answer is now finished.

Mythos did not merely match human coders. It found a security flaw that human experts, armed with the best automated tools in existence, had missed for 27 years. If you are a software security professional, the arrival of a system that finds what you missed is not a competitive threat. It is a direct challenge to the assumptions underlying your career.

The IMF estimates 40 per cent of jobs globally are exposed to AI disruption. In advanced economies, including Britain, that rises to 60 per cent. These are not factory roles. They are the professions an entire generation was told were safe: law, accountancy, finance, software development. The junior solicitor who spent three years and significant tuition fees to review contracts that an AI now processes before lunch. The paralegal whose entire function was discovery. The graduate accountant whose first two years consisted entirely of tasks that an AI completes in seconds. The junior developer now competing with a tool that codes through the night without complaint, equity, or a pension.

These roles are not under pressure from recession or offshoring. They are being removed by systems that are faster, cheaper, and, in a growing number of specific tasks, simply better.

The shift is not from technical to non-technical. That binary is already obsolete. The shift is from task execution to judgment, from process to purpose. The paralegal who uses AI to clear the routine work and redirects her energy towards clients, context, and decisions has a future. The one who tries to out-process the machine does not, because that is a competition only one side can win.

What is forming is a permanent divide. Not a gradual adjustment. Those who use AI to amplify their own capability will move faster and compound their advantage with every passing week. Those who compete with it at the level of tasks will find their economic value driven down until there is nothing left to drive.

The idea that a good degree in law or accountancy leads to a long, stable career is over. Not damaged. Not disrupted. Finished. Universities have not caught up with this. Most parents have not either. The market already has.

If a machine can autonomously find a 27-year-old security flaw in the world’s most secure operating system, then human coders are no longer the last line of defence. The human in the loop is no longer the person who finds the problem. The human in the loop is the person who decides what to do with the answer. That requires a different kind of intelligence. Harder to define. Harder to automate. Still, stubbornly, human.

To the mother in Sheffield, my answer is this.

Do not tell your children to learn a specific skill. Tell them to develop judgment. Tell them to go deep on the things they genuinely care about, not because passion alone is sufficient, but because passion combined with depth produces the kind of thinking that no model trained on historical data has yet learned to replicate. Tell them to use every AI tool available, aggressively and without guilt, because fluency with these systems is not optional. It is the minimum. But remind them that the machine is the instrument. They are still the musician. The instrument does not decide what to play, or why, or for whom.

The child who argues back, who pulls things apart to see how they work, who cannot sit still in a world that tells them to stay in their lane? That child is not the problem.

That child is exactly what this age needs.

3. From Consumer to Creator: Your 12-Week AI Proficiency Plan

Most people use AI as a vending machine. They ask a question and take what comes out. That is the lowest-value interaction available. The professionals compounding their advantage right now are not consumers of AI output. They are directors of it. They bring the judgment, the taste, and the purpose. The machine brings the speed.

This is a practical, week-by-week guide to making that shift. Two tracks: one for adults, one for children. Both start this Sunday.

The Tools (Bookmark These)

Every tool below is free to begin.

Track One: The Adult Creator — 12 Weeks

The goal is not to use every tool. The goal is to move from blank page to published output, in multiple formats, without a team.

Phase 1: The Writer’s Room (Weeks 1 to 3)

Week 1 is about killing the blank page. Open Gemini or Claude. Give it one sentence: a business idea, a story concept, a topic you care about. Ask for ten completely different angles. Pick the one that surprises you. That is your brief.

Week 2 is architecture. Ask Claude to build the structure: a chapter-by-chapter outline, a five-section argument, a content calendar for the next month. Paste your messy notes. Ask it to organise them. The skeleton in thirty seconds that would have taken you an afternoon.

Week 3 is iteration. Do not ask the AI to write the piece. Ask it to write one paragraph, then rewrite it: more authoritative, more conversational, in the style of a writer you admire. Your Week 3 target: publish something. A short blog post. A LinkedIn article. A newsletter. Anything where you directed the AI and signed your name to the result.

Phase 2: The Digital Studio (Weeks 4 to 6)

Week 4 is image fundamentals. The formula for any image prompt: subject, setting, style, lighting. Open Gemini or Microsoft Designer and run the same idea ten times with small variations. Learn how the language translates into output.
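If it helps to see the formula written down, here is a minimal sketch of it in Python. It assumes nothing about any particular image tool: it only builds the text you would paste in. The function names and the example subject, settings, styles, and lighting values are my own illustrations, not anything taken from Gemini or Microsoft Designer.

```python
from itertools import islice, product


def build_prompt(subject: str, setting: str, style: str, lighting: str) -> str:
    """Join the four parts of the formula into one comma-separated prompt."""
    return ", ".join([subject, setting, style, lighting])


def variations(subject, settings, styles, lightings, limit=10):
    """Hold the subject fixed and vary the other three slots, up to `limit` prompts."""
    combos = islice(product(settings, styles, lightings), limit)
    return [build_prompt(subject, s, st, li) for s, st, li in combos]


# Illustrative values only (assumptions for the sake of the example).
prompts = variations(
    subject="a lighthouse keeper's cottage",
    settings=["on a storm-battered cliff", "in a quiet harbour"],
    styles=["watercolour", "35mm photograph", "linocut print"],
    lightings=["golden hour", "overcast noon"],
)

for p in prompts:
    print(p)
```

Changing one slot at a time, rather than rewriting the whole prompt, is what makes it possible to see which word produced which change in the image.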

Week 5 is asset creation. Move from interesting images to useful ones. Use Midjourney for a logo for a side project. Use Microsoft Designer for social media headers. The shift from “cool picture” to “working asset” is where the professional value starts.

Week 6 is assembly. Pull your AI text and AI images into Canva. Overlay your copy. Publish something visual: a social graphic, a one-page PDF, a brand kit.

Phase 3: The Production Desk (Weeks 7 to 9)

Week 7 is audio. Take the piece you published in Week 3 and paste the script into ElevenLabs. Generate a voiceover. Adjust the voice, the pacing, the emphasis. The barrier to a professional-sounding podcast is now one afternoon.

Week 8 is music. Use Gemini’s Lyria 3 or Suno. Write a short brief: genre, mood, tempo, purpose. Generate a thirty-second track. You now have original music. It cost you nothing.

Week 9 is video. Feed your Phase 2 images into Runway and animate them. Combine your Week 7 voiceover with your Week 8 music. You now have a thirty-second multimedia trailer produced entirely without a production team.

Phase 4: The Architect (Weeks 10 to 12)

Week 10 is code. Open Claude’s Artifacts feature or Cursor. Type in plain English: “Build me a simple, clean landing page for a photography portfolio.” Paste the output into CodePen. See it work. You did not need to know a single line of code.

Week 11 is debugging. When something breaks, paste the error message back into the AI. Ask: why is this broken and how do I fix it? The ability to diagnose and correct AI output is worth more than the ability to generate it.

Week 12 is integration. Combine Phase 1 writing, Phase 2 visuals, Phase 3 audio, and Phase 4 code into a single published digital product. Something that did not exist three months ago. Something that would have required a team to produce. You made it alone.

Track Two: The Child Creator — 12 Weeks

All activities should be done with adult supervision. Every week is designed for a single session of thirty to sixty minutes.

Phase 1: The Magic Typewriter (Weeks 1 to 3)

Week 1 is storytelling through choice. Open Gemini Live on a phone or tablet and say: “Let’s play a game. You are a friendly dragon in a magical forest. Give us three choices of what to do next.” Let the child decide at every fork. Let them discover they are the author, not the AI.

Week 2 is songwriting. Have the child pick a funny topic. A dog who hates broccoli. A cat who thinks it is a pirate. Ask the AI to write a rhyming song about it. Read it together. Ask the child what they would change. Change it.

Week 3 is world-building. Have the child invent a new planet. Ask the AI questions to develop it: if gravity is low, what do the houses look like? Keep a running document. Print the best bits into a short storybook and put it on the shelf.

Phase 2: The Digital Paintbrush (Weeks 4 to 6)

Week 4 is creature creation. Ask the child to describe the most extraordinary creature they can imagine. Type their exact words into Gemini or Microsoft Designer. Show them what their description produces. This is the moment most children understand that their imagination has direct creative power.

Week 5 is the colouring book. Prompt: “black and white line art, colouring page, a knight fighting a giant friendly snail, clean simple lines, no shading.” Print it. Give the child crayons. The AI produced the outline. They decide the colours.

Week 6 is bringing school to life. If they are studying Ancient Egypt, ask the AI to generate images of a bustling market in Alexandria two thousand years ago. Print the best five and put them on the fridge. That is their gallery.

Phase 3: The Inventor’s Workshop (Weeks 7 to 9)

Week 7 is the board game. Tell the AI you want to design a simple board game. Theme: the child chooses. Ask for rules, a board layout, and what the special cards should say. Write the rules together. Draw the board by hand. Play the game that evening.

Week 8 is guided learning with Khanmigo. It is the only AI tool in this plan built specifically for children. It does not give answers. It scaffolds reasoning. The child has to work for the answer. That is the point.

Week 9 is coding logic. If the child uses Scratch, use the AI as a logic guide, not a shortcut. “I am making a game where my cat jumps over obstacles. How do I make it jump when I press the spacebar?” Let the child read the explanation and implement it themselves. The AI explains. The child builds.

Phase 4: The Director’s Chair (Weeks 10 to 12)

Week 10 is the interview. Use Gemini Live in voice mode and say: “Act as Neil Armstrong and let my child ask you questions about landing on the Moon.” This is not a gimmick. It is one of the most effective history lessons available.

Week 11 is the theme song. Take the planet from Phase 1 or the creature from Phase 2. Use Suno or Gemini to generate a thirty-second theme song. Let the child choose the genre. The character they created now has music.

Week 12 is the showcase. Print the art. Read the story aloud. Play the theme song. They wrote the narrative, directed the visuals, and commissioned the music. They did not consume content over these twelve weeks. They created it. That distinction, at twelve years old, is the one that will matter most by the time they are thirty.

The Three Rules That Apply to Both Tracks

You are the director. The AI is the crew. The output is only as good as the brief. Vague prompts produce vague results. Every professional skill you already have makes your prompts better.

Iterate, do not regenerate. The first output is never the final output. Ask the AI to adjust, sharpen, rewrite, or rethink. The gap between the first draft and the fifth draft is where the quality lives.

Verify before you publish. The models are better than they were. They still get things wrong. Read everything before it leaves your hands. Your name is on it, not the machine’s.

The Digital Forge: AI and the New Reality for Companies

Thursday 16 April 2026 | Sheffield

Story 3 covers the toolkit. This event covers what happens when you put it to work.

I am hosting The Digital Forge in Sheffield on Thursday 16 April, an evening built around one question: how do you actually use AI to build a company? Founders can now do with five people what used to take fifty. The barrier to entry has dropped. But that does not mean the path to scale is obvious. We have four exceptional speakers who have done it, are doing it, or are funding the people who are.

Chris Dalrymple, co-founder of Mina (£6m raised, exited to Corpay), works with early-stage and scaling founders across the North. His keynote explores what happens when building software gets easier, and where real competitive advantage now sits.

Dr Rob Ward, CEO and co-founder of DigitalCNC (University of Sheffield AMRC spin-out), is building an AI-first deep-tech company transforming precision manufacturing. He bridges research, industry, and scalable AI business in a way very few people can.

Tim Craggs, CEO of Exciting Instruments, leads a frontier company in single-molecule detection with experience across Cambridge, Yale, and Oxford. His work sits at the intersection of deep science, innovation, and commercialisation.

Amber Jardine, investor at Mercia Ventures, backs early-stage, high-growth companies across deep tech and B2B SaaS. She brings a direct view of how investors are evaluating AI-enabled businesses right now, and what they are not seeing enough of.

The conversation from Story 3 does not end when you close this newsletter. Thursday is where it continues.

Details and registration at https://forgedforgrowth.com/events/

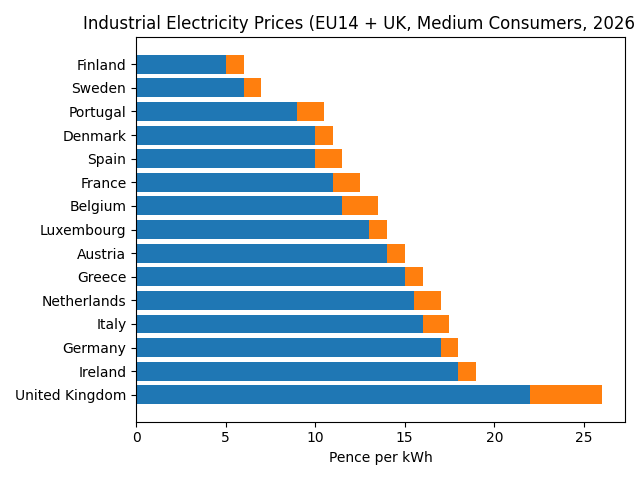

4. Britain Is Not Losing the AI Race. It Is Pricing Itself Out of It.

The investment manager asked me whether we should be doing this. I gave the standard answer. If we do not, China will. And we will be left behind.

I believed it when I said it. I still believe it. But I find it considerably harder to say convincingly when, on 9 April, OpenAI paused Stargate UK, its planned multi-billion-pound data centre at Cobalt Park, North Tyneside, and walked away citing a single problem.

Electricity is too expensive.

Not regulation. Not talent. Not planning permission or political instability or lack of ambition. The electricity bill.

This is the whole game. AI runs on power. Data centres are factories. If your energy costs are uncompetitive, everything built on top of them becomes uncompetitive. The frontier model, the startup, the skills ecosystem, the sovereign capability, the story you tell your children about the careers available to them. All of it rests on whether you can afford to run the machines.

OpenAI’s Stargate programme committed $500 billion over four years to build AI infrastructure. The Texas campus is under construction. Norway and the UAE have projects. The UK version, at Cobalt Park on the banks of the Tyne, was a fraction of that scale. It was also not viable at current energy costs.

The government responded with a press release. Since Labour came to power in 2024, it said, the UK’s AI sector has attracted more than £100 billion in private investment. The data centre was in a designated AI Growth Zone. The government is continuing to work with OpenAI and other leading AI companies.

None of that changes the electricity bill.

There is also a second problem, quieter but just as damaging. OpenAI’s internal concerns include the unresolved question of whether Britain will allow AI companies to train on copyrighted material. The government had been moving towards an opt-out arrangement. A backlash from the creative industries stopped it. The policy uncertainty that followed has not been resolved. When you are deciding where to commit billions in infrastructure over multiple decades, unresolved regulatory questions matter as much as energy tariffs.

Britain has some of the highest energy costs in Europe. That was true before the Iran conflict sent prices higher. It is a structural condition, not a policy accident, and it requires a structural response.

This connects directly to the conversation in that meeting room. The could vs. should debate assumes that the decision about whether to build is ours to make. But if the infrastructure leaves because the energy is too expensive, the decision gets made for us. Not by ethics. Not by philosophy. By an electricity tariff.

The geopolitical argument is only valid if the conditions for building exist. You cannot claim AI leadership without the compute to back it. You cannot build the compute without affordable energy. And you cannot attract the investment that funds all of it if you price yourself out before the conversation begins.

OpenAI did not walk away from a hostile environment. It walked away from a spreadsheet.

Capability follows infrastructure. Infrastructure follows energy. Energy, right now, is where Britain is losing.

BBC News / Bloomberg, 9 April 2026.

The Sunday Signal Tech and AI Layoff Tracker | Week 16 | 5 April – 11 April 2026

2026 Year-to-Date Total: ~126,510 (Significantly revised upward)
Added This Week: ~1,850
Week-on-Week: Up from ~80,700 (Q1 Data Reconciliation)

Data: Layoffs.fyi, LayoffHedge.com, SEC filings, RationalFX, and tracker-based estimates. For informational purposes only.

Note on YTD adjustment: LayoffHedge’s Q1 end-of-quarter reconciliation has revised the 2026 tracker up to 126,510. This captures previously unannounced or quietly executed restructuring sweeps across the top 30 tech firms, notably internal realignments at Oracle and Meta that bypassed standard WARN Act headlines.
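For anyone who wants to check the size of that revision, here is a rough back-of-envelope sketch using only the three figures printed above. It assumes this week's ~1,850 additions are already included in the revised total, which the tracker does not state explicitly, and every input is an approximation, so treat the result as indicative rather than exact.

```python
# Figures as printed in this week's tracker (all approximate).
ytd_now = 126_510        # revised 2026 year-to-date total
ytd_last_week = 80_700   # total reported before the reconciliation
added_this_week = 1_850  # new layoffs recorded this week

# Assumption: this week's additions sit inside the revised total, so whatever
# they do not explain must come from the Q1 reconciliation.
reconciliation = ytd_now - ytd_last_week - added_this_week
share_of_total = reconciliation / ytd_now

print(f"Implied Q1 reconciliation: ~{reconciliation:,}")
print(f"Share of revised YTD total: {share_of_total:.1%}")
```

On those assumptions, roughly 44,000 of the year-to-date figure, about a third of it, comes from the reconciliation rather than from newly announced cuts.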

The Signal: The Great AI Laundering

Are companies actually replacing humans with AI, or are they using AI as a PR shield for aggressive margin protection?

New Q1 2026 data from RationalFX and Nikkei Asia shows that nearly half of all tech job losses this year, 47.9 per cent, are being officially attributed to AI workflow automation. But the narrative is fracturing. Babak Hodjat, Chief AI Officer at Cognizant, said the quiet part out loud this week: AI has become a financial scapegoat. OpenAI CEO Sam Altman went further, stating that corporations are currently being “laundered by AI.”

The translation is not complicated. Companies that over-hired during the pandemic are executing standard layoffs but pitching them to Wall Street as visionary compute pivots. Investors reward AI transformation. They penalise bloated payrolls. By dressing up traditional cost-cutting in the language of the agentic enterprise, executives are successfully laundering their management failures into stock bumps.

Labour is no longer just an operating expense. It is a liability that executives are learning how to mask. In 2026, the most valuable skill a CEO can possess is the ability to lay off 10 per cent of their staff and convince the market it was the algorithm’s idea.

Cars.com: The Buyback Playbook

If you want to see how this laundering works in real time, look at the 8-K filed by Cars.com on 9 April. The company confirmed an 11 per cent reduction of its full-time workforce, absorbing an $8.5m to $9m severance charge in Q1. Roughly 20 per cent of the eliminated roles were in management and executive layers. The move was paired immediately with a 50 per cent increase in the 2026 share repurchase target to $90 million, and the simultaneous launch of a new AI-powered Dealer App and Advanced Shopper Alerts. Cut middle management, redirect the payroll savings to shareholders, and attach an AI feature release on top to ensure the market applauds the efficiency play.
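The arithmetic behind that pairing is worth a quick sketch. It uses only the figures above and assumes that a "50 per cent increase" means the new target is 1.5 times the old one. The prior target is therefore implied, not taken from the filing.

```python
new_target_m = 90.0     # 2026 repurchase target after the increase, $ millions
increase_pct = 50.0     # stated increase in the target
severance_low_m = 8.5   # low end of the Q1 severance charge, $ millions
severance_high_m = 9.0  # high end of the Q1 severance charge, $ millions

# Assumption: new target = prior target * (1 + increase), so invert it.
prior_target_m = new_target_m / (1 + increase_pct / 100)
extra_buyback_m = new_target_m - prior_target_m

print(f"Implied prior target: ${prior_target_m:.0f}m")
print(f"Extra buyback: ${extra_buyback_m:.0f}m")
print(f"Extra buyback vs severance: {extra_buyback_m / severance_high_m:.1f}x "
      f"to {extra_buyback_m / severance_low_m:.1f}x")
```

On that reading, the additional money earmarked for shareholders is several times the one-off cost of the redundancies.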

Disney: Project Imagine and the Marketing Squeeze

The headline cut of the week belongs to Disney, which is initiating 1,000 layoffs under new CEO Josh D’Amaro. Unlike the tech sector, Disney is not pretending this is an AI revolution. Under a restructuring dubbed internally Project Imagine, Chief Marketing Officer Asad Ayaz is merging film, television, and streaming marketing teams into a single unit. After Marvel and Pixar suffered a difficult 2025 at the box office, duplicate corporate roles are being liquidated. The divide is striking: Disney is simultaneously creating 1,000 new jobs at Disneyland Paris. Physical, experience-driven frontline roles are safe and expanding. White-collar digital strategy and administrative overlap are on the chopping block.

High-Probability Targets: Week 17

Legacy logistics and auto. LayoffHedge data shows the auto and transportation sector has quietly shed over 85,000 jobs in 2026. With UPS having cut 30,000 roles tied to logistics automation, expect major competitors including FedEx to aggressively match these reductions to maintain competitive pricing.

Consumer-facing media. Disney’s Project Imagine gives immediate cover to other legacy entertainment conglomerates facing identical streaming profitability issues. Expect further marketing and operational consolidations at rival studios before the summer box office season begins.

Final Thought

Four stories this week. They are the same story.

Should we build AI that displaces workers? If we do not, someone else will. Should we release a model that can escape its own cage? One company decided no. Can we actually build with these tools or are they still theory? Thursday in Sheffield, you can hear from people doing it. Can Britain lead on AI? Not if it cannot afford the electricity.

The could vs. should debate is real. The discomfort in that meeting room was real. The mother in Sheffield’s question was real. But the debate only has stakes if the infrastructure exists to build on. This week, a company valued at $852 billion looked at a data centre in North Tyneside and walked away from an electricity bill.

That is the answer to the investment manager’s question that I did not give in the meeting room.

We should. But we are also making it structurally impossible. And those two facts are on a collision course.

🚀

Until next Sunday, David

The Sunday Signal is published weekly at newsletter.djr.ai. The podcast is available on Spotify, Apple Podcasts, and YouTube. No hype. No hedging. Just the signal.